Qwen3.5 Medium Matches Sonnet 4.5 on Local Hardware

Qwen3.5-27B and 35B are open-source and run locally on 18-24GB of VRAM. Their benchmarks rival Claude Sonnet 4.5, with a 1M-token context window and native tool use.

Alibaba’s Qwen 3.5 boosts multimodal and agent skills, narrowing the gap with US AI leaders while staying efficient through a sparse MoE design.

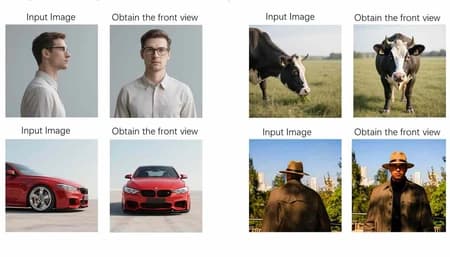

Qwen Image 2.0 unifies generation and editing with sharper 2K visuals, better humans and typography that finally makes text‑heavy designs workable.

Cinematic portrait: gentle Rembrandt key, contour backlight, and balanced orange‑teal tones enhance mood and realism.

An 80B MoE model with only 3B active params, Qwen3 Coder Next aims for high coding performance while remaining practical on consumer hardware.

Qwen‑Image‑2512: a serene studio portrait in Rembrandt light, with quiet poise, soft shadows, and luminous realism shaped by subtle depth and tone.

Alibaba’s reasoning model pairs test‑time scaling with web search and code tools, topping Gemini 3 Pro and GPT‑5.2 on HLE search and math benchmarks.

Chinese open source AI like DeepSeek and Qwen is winning US clients by mixing solid performance with flexibility and lower operating costs.

Qwen‑Image‑2512 blends grace and grit: a ballerina in leather moves through castles, lofts, and icy cities with stunning realism.

Qwen-Image-2512 offers open-source, Apache-licensed image generation that challenges Google’s Nano Banana Pro for flexible, enterprise-ready workflows.

As enterprises discover capable open models they can run themselves, the pricing power of closed US platforms starts to look increasingly fragile.

Qwen-Image-Layered splits flat images into editable RGBA layers, enabling consistent, Photoshop-like edits with precise control over each element.

Qwen-Image-Edit adds precise bilingual text, semantic, and appearance editing via dual encoding, and is accessible through Chat, the API, Hugging Face, and as open source.

Alibaba’s Qwen3‑Coder: a 480B MoE model (35B active), an agentic coding AI with a massive context window that matches GPT‑4/Claude and is fully open‑source.
