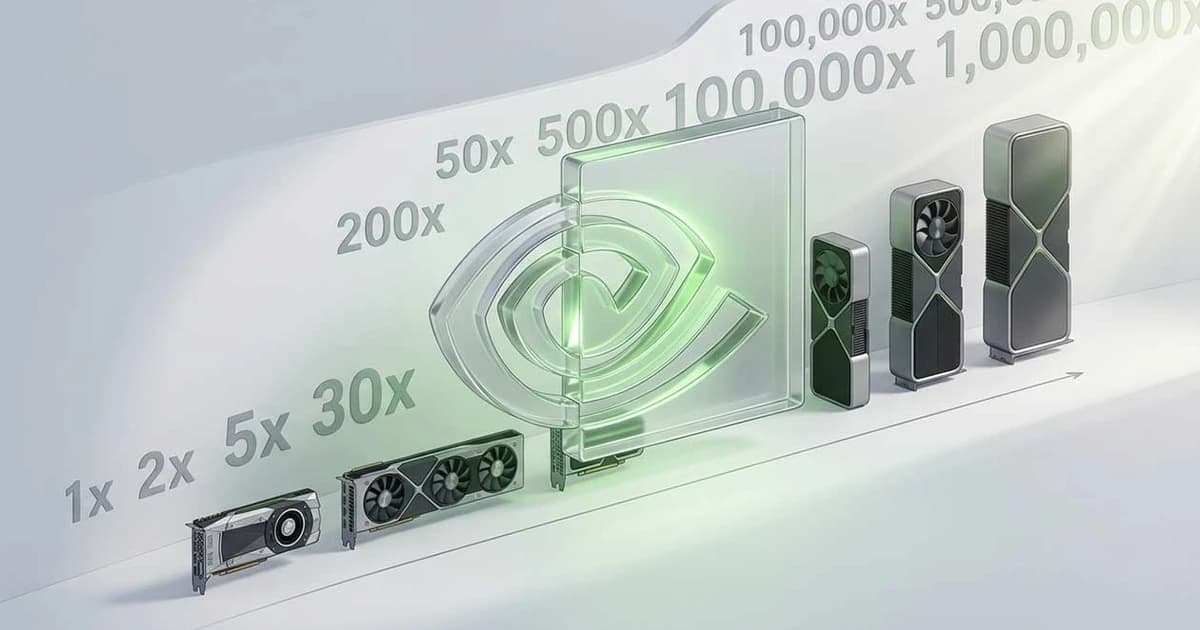

At GDC 2026, Nvidia put a bold number on stage: future gaming GPUs delivering up to 1,000,000 times the path tracing performance of the old Pascal-based GTX 10 series, with current RTX generations said to already reach roughly 10,000x in specific path-traced workloads. John Spitzer, Nvidia’s VP for developer and performance technology, framed this as the route to real-time graphics that look indistinguishable from reality, but openly admitted that Moore’s Law on its own will not get us there. Instead, the roadmap leans heavily on dedicated RT and Tensor cores plus neural rendering techniques such as DLSS 4.5, Multi Frame Generation 6x and new RTX toolkits, which use trained models to generate most on-screen pixels and even entire extra frames.
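To get a feel for what "most on-screen pixels" can mean, the sketch below estimates the share of displayed pixels that are conventionally rendered when upscaling and frame generation are combined. The function name, the 1/4 render-resolution ratio (half width, half height) and the reading of "6x" as one rendered frame out of every six displayed are illustrative assumptions, not figures from Nvidia's talk.

```python
from fractions import Fraction


def rendered_pixel_share(resolution_scale: Fraction, frames_per_rendered: int) -> Fraction:
    """Share of displayed pixels that were rendered rather than generated.

    resolution_scale: rendered pixels / output pixels per frame (assumed value).
    frames_per_rendered: displayed frames per rendered frame (assumed value).
    """
    if not 0 < resolution_scale <= 1:
        raise ValueError("resolution_scale must be in (0, 1]")
    if frames_per_rendered < 1:
        raise ValueError("frames_per_rendered must be at least 1")
    return resolution_scale / frames_per_rendered


# Assumption: upscaling from 1/4 of the output pixels, plus 6x frame generation.
share = rendered_pixel_share(Fraction(1, 4), 6)
print(f"rendered: {float(share):.1%}, generated: {float(1 - share):.1%}")
# prints "rendered: 4.2%, generated: 95.8%"
```

Under these assumed settings, roughly one displayed pixel in 24 comes out of the traditional rendering pipeline; other quality modes or frame generation factors would shift that ratio.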

Technically, this “million times” leap does not come from a single, ever-larger shader engine but from stacking several layers of efficiency: hardware RT units for fast ray traversal, smarter sampling schemes like ReSTIR, and new scene structures like RTX Mega Geometry that compress and reorganize huge BVHs (bounding volume hierarchies) so that dense environments with trillions of triangles remain GPU cache-friendly. The Witcher 4 tech demo cited by Nvidia, with over two trillion triangles path traced in real time, is meant to show how far that combination can go when geometry handling and reconstruction are tuned together. On top of that, Nvidia expects more and more “on-device AI” for game logic and interaction, from DLSS-style image models to local speech and behavior models that power in-game assistants and NPCs.
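ReSTIR builds on weighted reservoir sampling, which keeps a single candidate from a stream while selecting each one with probability proportional to its weight. The following is a minimal sketch of that streaming update only; the `Reservoir` class, the `pick_light` helper and the light weights are hypothetical, and the sketch leaves out the target-function weighting and the spatial and temporal reuse that the full technique adds. It is not Nvidia's implementation.

```python
import random
from dataclasses import dataclass
from typing import Any, Iterable, Optional, Tuple


@dataclass
class Reservoir:
    """Holds one sample chosen from a stream of weighted candidates."""
    sample: Optional[Any] = None
    w_sum: float = 0.0   # running sum of candidate weights
    count: int = 0       # number of candidates seen so far

    def update(self, candidate: Any, weight: float, rng: random.Random) -> None:
        self.count += 1
        if weight <= 0.0:
            return  # zero-weight candidates can never be selected
        self.w_sum += weight
        # Replace the kept sample with probability weight / w_sum.
        if rng.random() < weight / self.w_sum:
            self.sample = candidate


def pick_light(lights: Iterable[Tuple[str, float]], rng: random.Random) -> Reservoir:
    """Stream over (name, estimated contribution) pairs, keeping one light."""
    reservoir = Reservoir()
    for name, contribution in lights:
        reservoir.update(name, contribution, rng)
    return reservoir


# Hypothetical scene: weights stand in for each light's estimated contribution.
lights = [("sun", 90.0), ("lamp", 9.0), ("candle", 1.0)]
rng = random.Random(0)
picks = [pick_light(lights, rng).sample for _ in range(10_000)]
print({name: picks.count(name) / len(picks) for name, _ in lights})
```

Over many trials the selection frequencies approach the weight ratios (about 90%, 9% and 1% here), which is why a renderer can spend its limited rays on the lights that matter most without storing every candidate.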

Viewed soberly, this is a mix of real progress and marketing spin. Comparing RTX hardware that fully accelerates path tracing against a Pascal GPU with no RT cores inflates the 10,000x headline, and the further 100x needed to reach a million rests on a combination of better silicon, much heavier AI reconstruction and looser definitions of what counts as “path traced”. For players, the upside is obvious: more cinematic lighting, higher effective frame rates and likely more games adopting full path tracing by default rather than as a curiosity mode. The trade-off is that the raw, “every pixel physically simulated” ideal is quietly being replaced by a hybrid pipeline where AI generates most of what you see, and where availability, cost and vendor lock-in may matter just as much as teraflops in deciding who actually enjoys that future.
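The headline figures can be put in perspective with a few lines of arithmetic. The sketch below takes the two claimed speedups from the talk and compares them with what transistor scaling alone would give since Pascal's 2016 launch; the two-year doubling period is the commonly quoted form of Moore's Law and is an assumption here, as are the variable and function names.

```python
import math

CURRENT_CLAIM = 10_000      # claimed speedup of current RTX over Pascal
FUTURE_CLAIM = 1_000_000    # claimed speedup of future GPUs over Pascal


def doublings(speedup: float) -> float:
    """Number of performance doublings a given speedup corresponds to."""
    return math.log2(speedup)


# Gap between today's claim and the future claim.
remaining = FUTURE_CLAIM / CURRENT_CLAIM

# Assumption: performance doubles every 2 years from 2016 (Pascal) to 2026.
moore_only = 2 ** ((2026 - 2016) / 2)

print(f"remaining factor: {remaining:.0f}x")                        # 100x
print(f"doublings for 10,000x: {doublings(CURRENT_CLAIM):.1f}")     # 13.3
print(f"doublings for 1,000,000x: {doublings(FUTURE_CLAIM):.1f}")   # 19.9
print(f"Moore's Law alone, 2016 to 2026: {moore_only:.0f}x")        # 32x
```

Under that assumed doubling rate, a decade of scaling yields about 32x, so nearly all of the claimed 10,000x has to come from dedicated RT hardware, algorithmic gains and AI reconstruction, which is consistent with the admission that Moore's Law alone is not enough.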