US export controls on advanced AI hardware have pushed China to accelerate its own chip ecosystem, making Huawei the flagship of its drive for technological self‑reliance. Huawei’s Ascend line, especially the 910B/910C and the newer 950‑series accelerators, now powers domestic AI clusters and national cloud projects where Nvidia silicon previously dominated. Benchmarks and statements from Chinese industry sources suggest that the Ascend 910B reaches roughly 80 percent of an Nvidia A100’s efficiency when training large language models, and in some specific tests it even outperforms the A100. At rack and supercomputer scale, Huawei’s Atlas SuperPoD systems deliver strong generation‑over‑generation throughput gains, which indicates that Huawei is not just taping out chips but building complete AI infrastructure stacks.

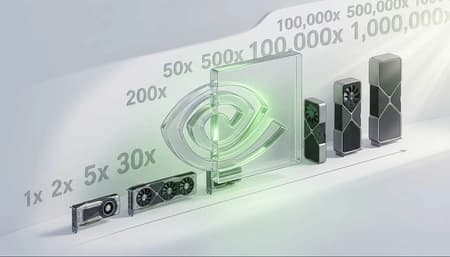

Even so, the gap to Nvidia’s newest architectures remains clear. The first difference is hardware. Nvidia’s Blackwell‑class accelerators deliver several times the peak compute per chip of Huawei’s current Ascend 950, and they benefit from more advanced fabrication processes and more efficient memory systems. Huawei and SMIC are believed to rely on 7 nm‑class nodes made without EUV lithography, which means lower yields and higher power consumption than Nvidia gets from TSMC’s 4 nm‑class process. The second difference is software. Nvidia’s CUDA ecosystem of libraries and tooling remains the de facto standard for AI research and production worldwide, while Huawei is still building out CANN and its Ascend‑native frameworks, with a heavy focus on the Chinese market.

So has Huawei caught up with Nvidia? In China‑specific deployments, especially where Nvidia’s best hardware is unavailable, Huawei offers credible alternatives that are good enough to run state‑of‑the‑art AI models, and that is strategically significant. On cutting‑edge performance, energy efficiency, ecosystem depth and global adoption, however, Nvidia still sets the pace: Huawei is narrowing the gap rather than overtaking.